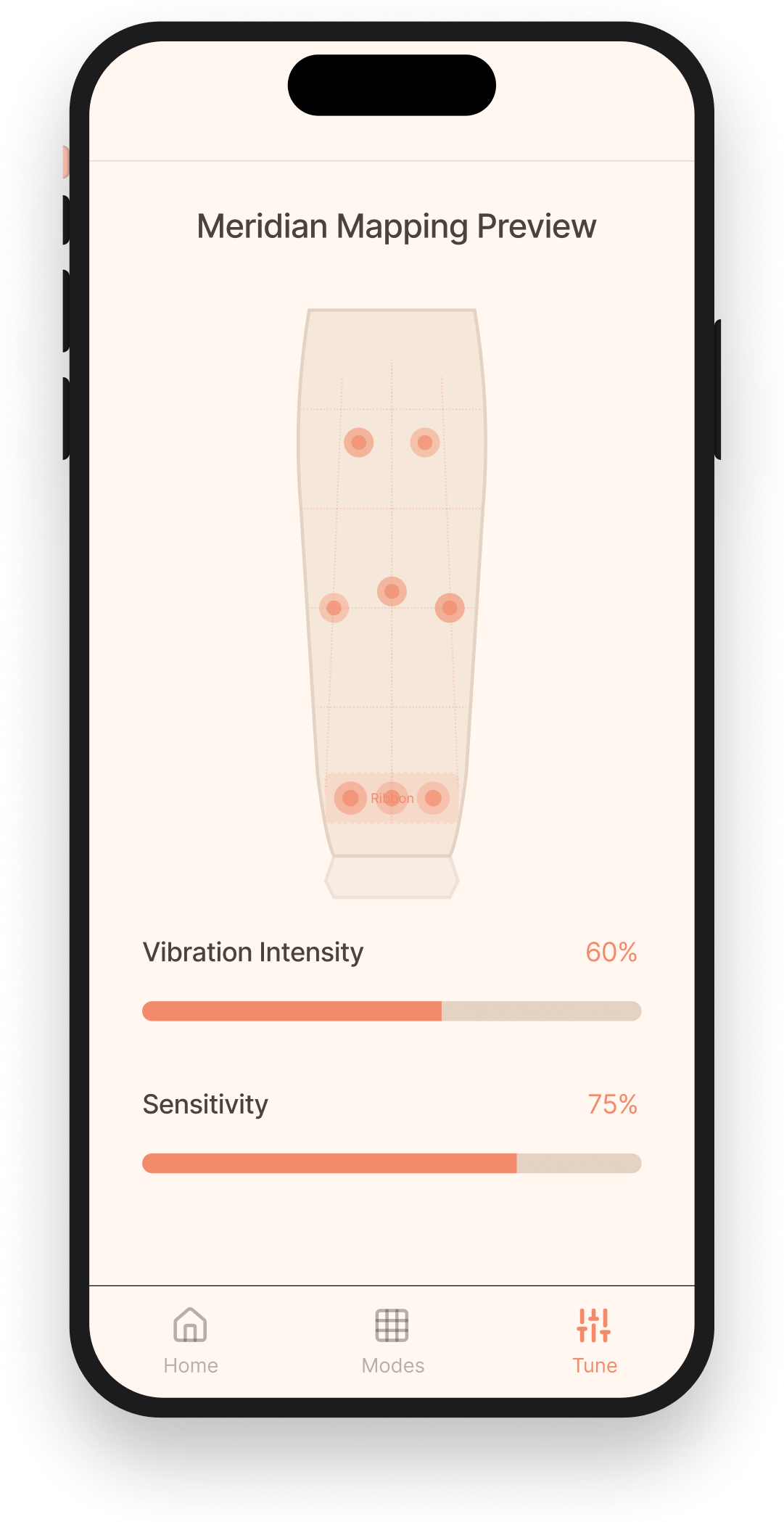

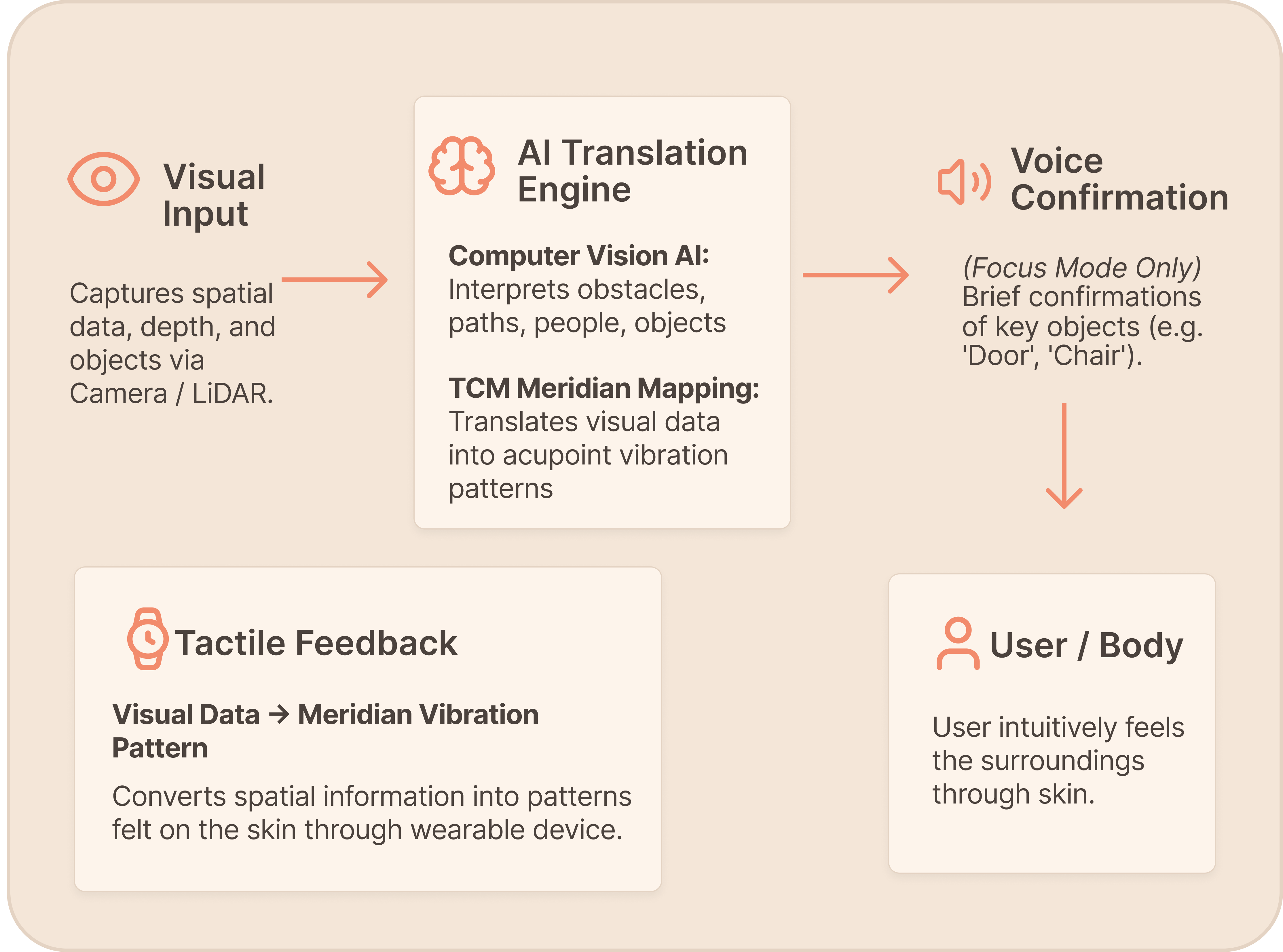

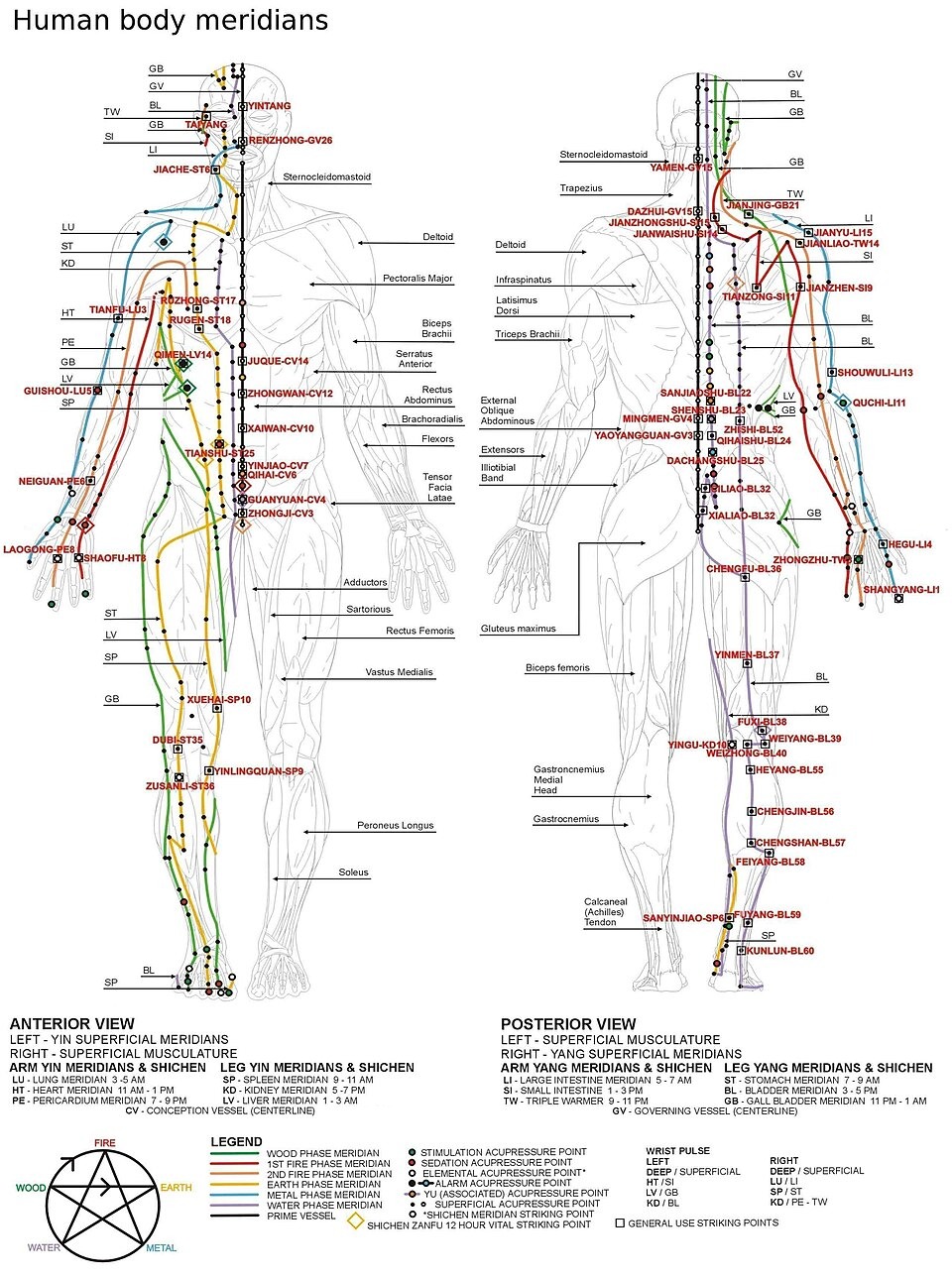

Why TCM? The Logic of Layout

Anatomical Sensitivity

Acupoints such as Neiguan (PC6) sit over areas of high nerve density. Mapping vibrations to these points should give clearer feedback and higher tactile acuity.

Cognitive Linear Flow

The brain tracks continuous lines more easily than random dots. Meridians act as a natural "highway" for information, reducing cognitive load.

Somatic Calm

In TCM, stimulating the Pericardium Meridian is associated with calming the heart. This turns the device into an emotional regulator, not just a navigation tool.

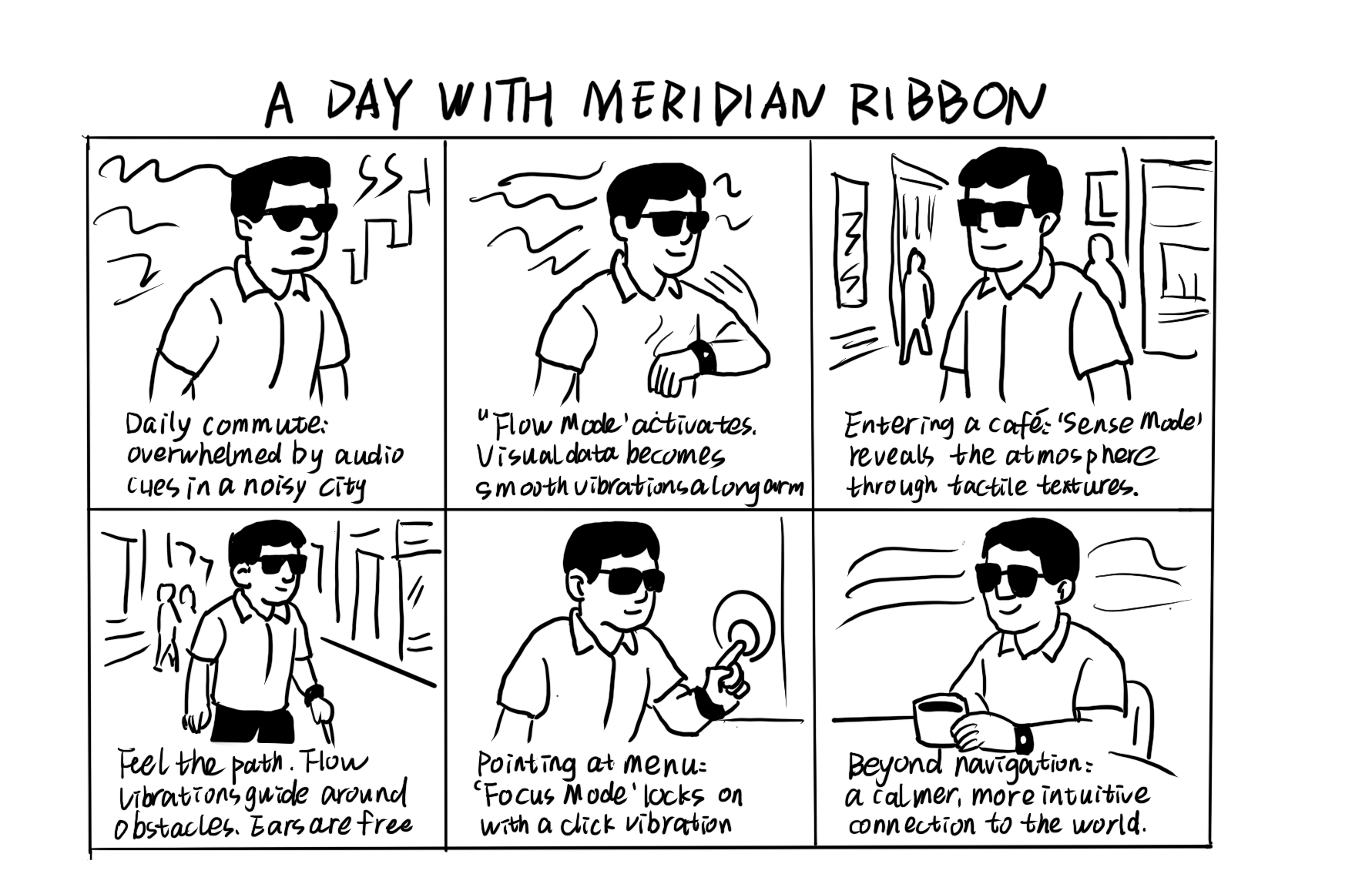

Insights from Interviews

"I can't form a map of the room — everything feels scattered."

Blind users shared that their biggest challenge is not the lack of information, but the lack of structure. They need spatial feedback that is continuous, directional, and quiet, not fragmented descriptions.

"Meridians are the body's natural pathways for sensing direction."

A TCM practitioner explained that the wrist and forearm meridians behave like an embedded spatial coordinate system. Sequential tactile stimulation along these lines creates a clear sense of flow and direction, even without vision.

Core Insight

Blind users need structured, directional, silent spatial cues — and meridian pathways offer a natural framework for delivering them.